OmniHuman-1 is a multimodal video generation model developed in-house by ByteDance. It generates highly realistic, audio-synchronized animated video from a single image and an audio track. Its core features and application scenarios are described below:

Multimodal Input and Generation:

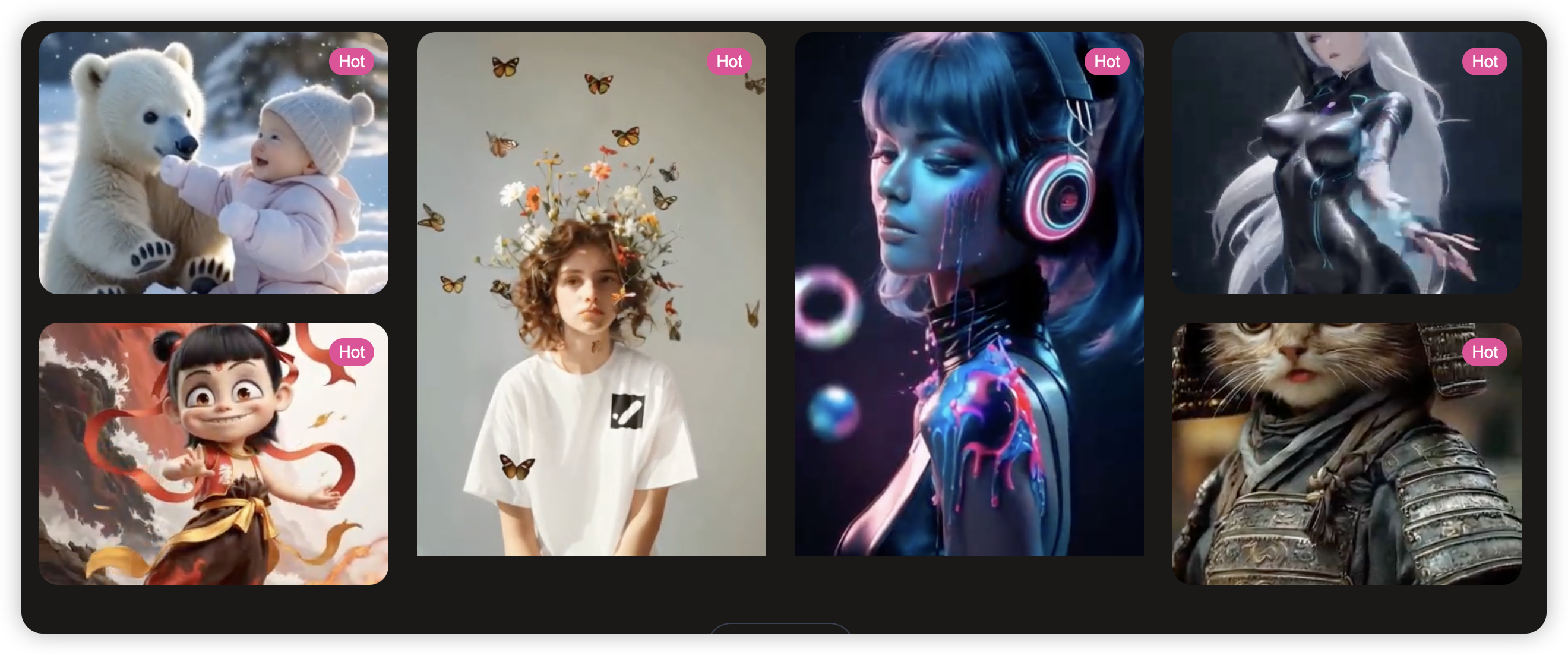

- Supports combining a single image (a real person, an anime character, a 3D cartoon, etc.) with audio (such as speech or music) to generate video animation that includes facial expressions, gestures, and full-body movement. For example, a photo of Einstein plus a speech audio track can produce a video of him giving a lecture.

- High-Precision Synchronization: The model closely matches lip movements and body actions to the speech. It also supports lip-syncing in side views, which is described as a first among similar tools.

- Diverse Style Support: In addition to real humans, it can generate dynamic videos of anime characters, animal figures, and more while maintaining the original style and movement patterns.

- Large-Scale Data Training: Trained on approximately 19,000 hours of human motion data, the model can generate videos of any length and adapt to different input signals.

Application Scenarios:

- Film and Advertising Production: Quickly generate advertising clips and virtual character performances, such as beauty product demonstrations and music videos, significantly reducing traditional filming costs.

- Education and Entertainment: Create content such as speeches by historical figures and virtual idol performances, like the "talking Albert Einstein" in the demo video.

- Animation Creation: Automatically generate expressions and movements for anime characters, simplifying the animation production process.

Technical Advantages and Limitations:

- Advantages: Compared to traditional deepfake technologies, OmniHuman-1 can generate full-body animations with higher realism and accuracy. For example, the generated videos excel in details such as gestures and object interactions.

- Limitations: The model is not currently available for public download and is only in limited internal testing at ByteDance. Film-quality output still requires further optimization.

Safety and Ethical Measures:

- ByteDance has implemented strict security review mechanisms to prevent misuse of the technology. All output videos are watermarked to identify them as AI-generated content.

Industry Impact:

- OmniHuman-1 is seen as a significant breakthrough in AI video generation, with the potential to disrupt industries such as advertising and film production. For example, extras in future films or animation productions could be replaced by AI, significantly reducing labor costs. In addition, its lightweight version, the Goku model (8 billion parameters), already targets the advertising market, which shows the range of applications for the technology.