While startups are riding the wave of AI, leading internet companies are also making significant strides in the AI race! On February 6, ByteDance's digital human team launched OmniHuman, a new multimodal digital human solution. It can generate vivid, highly natural videos from a single image combined with an audio input, whatever the image size and however much of the frame the person occupies.

ByteDance has introduced a brand-new AI digital human model.

Researchers at ByteDance have developed an AI model named OmniHuman-1 that can generate realistic, full-body dynamic videos from a single image, with stunning results.

The model can produce natural videos of humans talking, singing, and moving by combining a single image with audio or video, maintaining an extremely high level of realism. It accurately captures details such as facial expressions, body movements, hand gestures, and object interactions.

OmniHuman accepts several types of input (a single character image, audio, or video signals) and generates highly realistic human video animations. These range from facial expressions to full-body movements, including speaking, singing, and dancing. This goes beyond previous AI models, which could only animate faces or upper bodies.

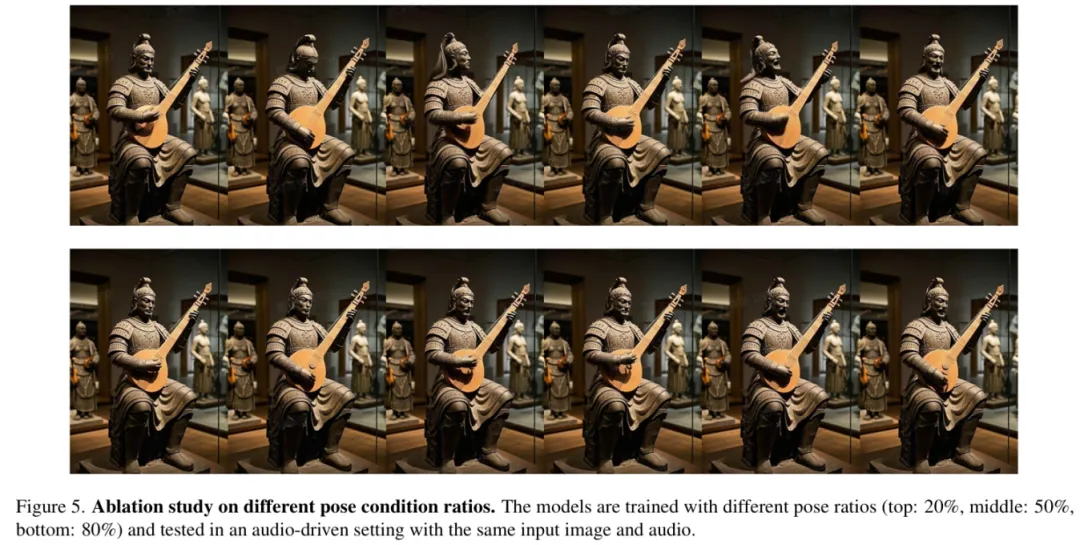

According to reports, the model is built on the DiT (Diffusion Transformer) architecture and uses a multimodal, motion-conditioned hybrid training strategy to address the scarcity of high-quality training data. The core of this technology is the way it combines text, audio, and human motion inputs. An innovative "full-conditioning" training method lets the AI learn from larger and richer datasets.

According to evaluation results, OmniHuman outperforms several existing models on a range of metrics.

The research team noted that OmniHuman was trained on more than 18,700 hours of human video data. By introducing multiple conditioning signals (such as text, audio, and poses), the technique improves the quality of the generated videos and also reduces data waste, meaning less of the collected data has to be discarded.

"OmniHuman has addressed long-standing problems in human animation generation, such as data scalability and generalization, by introducing multimodal condition-driven generation and a full-conditioning training strategy. This development comes amid increasingly fierce competition in AI video generation, with companies such as Google, Meta, and Microsoft actively pursuing similar technologies," industry insiders pointed out.

Digital Human Market Expected to Reach 10 Billion Yuan Next Year

The global digital human industry is now entering a period of high output, and the industry is expanding rapidly. Internet giants are actively entering the field. In addition to Baidu, Tencent, and Alibaba, companies including Huawei Cloud, JD Cloud, ByteDance, iFlytek, SenseTime, and Xiaoice are also producing virtual digital humans.

Data from Tianyancha shows that, as of the end of September 2024, the number of digital-human-related companies in China had reached 1.144 million, with more than 174,000 new registrations in the first five months of 2024 alone, which demonstrates the market potential and vitality of the digital human industry.

According to Zheshang Securities, digital humans are expected to become the service entry point for large AI models. They can help enterprises reduce costs and improve efficiency, and close the loop in which business-facing (toB) services are monetized through consumer-facing (toC) channels.

A recent IDC report indicates that China's virtual digital human market is growing rapidly and is expected to reach 10.24 billion yuan by 2026.

Zhiyan Consulting believes that as AI technology continues to advance, intelligently driven virtual digital humans will become the market mainstream. The core feature and competitive edge of a virtual digital human is how human-like it appears. Virtual digital humans come in two types: real-person-driven and AI-driven. Real-person-driven digital humans still rely on real people for motion capture, audio-visual synthesis, and similar tasks, and therefore achieve a higher degree of human-likeness. AI-driven digital humans, by contrast, are currently constrained by technology and equipment and are less realistic.

As AI technologies such as natural language processing and deep learning algorithms continue to develop and achieve breakthroughs, the perceptual, expressive, and cognitive abilities of AI-driven digital humans will be significantly enhanced, and their costs will fall further.

As their performance and cost advantages become more apparent, AI-driven digital humans capable of self-awareness and evolution will gradually replace real-person-driven ones, becoming the mainstream and finding wide application across many fields. The rise of AIGC technology will further strengthen the personalized customization and intelligent interaction capabilities of AI-driven digital humans.